In its investigation of the SpaceShipTwo crash, the NTSB blamed engineers at Scaled Composites.

“The National Transportation Safety Board determines that the probable cause of this accident was Scaled Composites’ failure to consider and protect against the possibility that a single human error could result in a catastrophic hazard to the SpaceShipTwo vehicle. This failure set the stage for the copilot’s premature unlocking of the feather system as a result of time pressure and vibration and loads that he had not recently experienced, which led to uncommanded feather extension and the subsequent aerodynamic overload and inflight breakup of the vehicle.”

Thus concludes the NTSB’s final report, released in July, on the Oct. 31, 2014, breakup and fatal crash of the Virgin Galactic SpaceShipTwo prototype.

I described the accident, and the design features and decisions that led up to it, in the April issue of Flying. The crux is that the copilot was supposed to perform a certain action when the speed of the climbing vehicle reached Mach 1.4, but no sooner. In fact, he did it at Mach 0.82, and nobody knows why. The first pilot, fortuitously thrown from the disintegrating cockpit at 50,000 feet, survived; the copilot died.

The NTSB chose to find that the cause of the SpaceShipTwo crash was not the inexplicable action of the copilot, but rather the failure of engineers at Scaled Composites, which was developing the spacecraft and was about to turn it over to Virgin for further testing, to anticipate and forestall that action. This strikes me as somewhat unfair. Based on the evidence presented in the 143-page report, the probable cause could equally well have been: “The copilot’s premature unlocking of the feather system, which resulted in loss of control and the breakup of the spacecraft. A contributing factor was the failure of Scaled Composites to identify this risk and to take steps to mitigate it.”

The Board often acts as though a sufficient number of probabilities constitute evidence. In this case it worked backward from the copilot’s inexplicable error to identify noise, G-loads and time pressure as reasons for the mistake. This is sheer speculation, and it’s unexpected that a professional test pilot of suborbital rocket vehicles would be so easily rattled. In fact, the report undermines its own conclusion, stating elsewhere that cockpit video shows little vibration; that there would have been ample time, around 20 seconds, between the firing of the rocket motor and the arrival of Mach 1.4; and that the combined longitudinal and vertical G-loads at the time added up to less than four. Both pilots had rehearsed the flight more than 100 times in the simulator, both had made previous powered flights in the rocket plane (although the copilot’s single powered flight had been more than a year earlier), and both had had aerobatic training flights in an Extra 300 to hone their G-tolerance. According to a close friend of the copilot, he was not feeling anxiety about the flight and was in fact looking forward to it. The only evidence that the copilot was overwhelmed by the cockpit environment is the fact that he made the mistake. The reasoning seems circular.

It was well-known at Scaled that premature release of the feather locks would result in loss of the ship and most likely of the lives of its pilots as well. There was no mystery about it. In SpaceShipOne, the pilot did not touch the feather actuators — a very simple, robust and fully redundant system — until the motor had burned out. It was felt, however, that the heavier, larger and denser SpaceShipTwo would experience “high G-loads, high speeds, flutter, and high heat loads on the vehicle” if it had to make an unfeathered descent after reaching a speed of more than Mach 1.8.

Engineers had thought hard about how to mitigate that risk, and eventually deemed it desirable to verify, well before reaching Mach 1.8, that the locks would release properly. But if the locks were released between Mach 0.8 and a little above Mach 1, the transonic air loads on the stabilizers would overpower the pneumatic actuators holding the tail down — as, in the event, they did. The selection of Mach 1.4 as the lock-release speed split the difference: At supersonic speed the tail loads would be safely downward, and there would still be time to shut down the rocket motor if the locks had failed to release.

The procedural change was made deliberately, not out of recklessness or failure to “consider,” but out of an excess of caution. Its purpose was to add redundancy to a critical system, but it had the unintended consequence of creating a new vulnerability. The NTSB identified the copilot’s required action at Mach 1.4 as a “single point of failure,” a term of art meaning any part of a structure or system for which there is no backup.

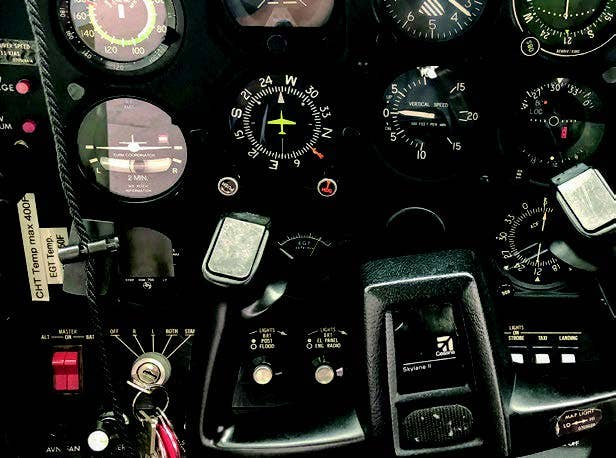

Airplanes have many single points of failure. They are dealt with in different ways. Critical fittings, like single-spar wing-to-fuselage attachments, are designed with larger factors of safety than the rest of the airplane. Critical electronic systems use components of exceptionally high demonstrated reliability. But there is one point of failure for which there is frequently no backup: the pilot.

Most airplanes can be destroyed in flight at the whim of their pilot. If there are two pilots, mistakes can often be averted; but in this case the first pilot’s attention was so completely absorbed by the pitch guidance, he later reported, that he never even saw the copilot reach forward for the feather-lock handles. The copilot’s action was caught by a video camera in the cockpit, however, and transmitted to the control room on the ground.

The only reason this particular action is identified as a single point of failure is that this was the action that caused the failure, but there were countless other actions that could have been fatal if performed incorrectly or omitted. They did not require special briefings and backups. If the maneuvering speed is 200 knots, you do not pull up sharply at 300 knots. You don’t spin a Gulfstream. These things go without saying.

Scaled could have built a safety lock into the feather-locking handle to prevent operating it while the spacecraft was in the transonic speed range. Indeed, Virgin is now doing so, though it is highly unlikely that this particular mistake would ever be repeated. But Scaled’s engineers would have considered that precaution no more necessary than a lock to prevent lowering the landing gear during the boost phase, or a Velcro strap to keep the pilot from taking his hands off the stick and sitting on them.

The report scrutinizes the interactions between Scaled and the FAA and finds fault here and there. Although during the early stages of SpaceShipOne development, Burt Rutan, a committed libertarian on his own behalf, railed against having to prove to the FAA that no desert tortoise was liable to be harmed by his activities, relations between Scaled and the FAA in later years have been pretty smooth. Scaled’s engineers are of a high order; they possess talent, pride and integrity, and the FAA has generally been willing to trust them to assess and mitigate risks without excessive report writing and verification. The NTSB thinks that if all bureaucratic procedures had been followed to the letter, in other words, if the FAA had been more intrusive and more paper had been shuffled, someone along the way would have anticipated that the copilot might prematurely release the locks and demanded that a protective system be added. Maybe so — and maybe not.

Bureaucracy has a place, but in this instance it’s doubtful that it could have made much difference. As a friend of mine wrote, reflecting on this report, “the only tool [bureaucrats] have is process, so they like to cling to the hope that process can solve the problem. But process only catches the most obvious risks. The rest is up to the engagement and imagination of the [engineering] team itself, and then there is always going to be that occasional stray synapse in a pilot’s head that nobody could predict.”
